Theres more than just chatgpt and American data center/llm companies. Theres openAI, google and meta (american), mistral (French), alibaba and deepseek (china). Many more smaller companies that either make their own models or further finetune specialized models from the big ones. Its global competition, all of them occasionally releasing open weights models of different sizes for you to run your own on home consumer computer hardware. Dont like big models from American megacorps that were trained on stolen copyright infringed information? Use ones trained completely on open public domain information.

Your phone can run a 1-4b model, your laptop 4-8b, your desktop with a GPU 12-32b. No data is sent to servers when you self-host. This is also relevant for companies that data kept in house.

Like it or not machine learning models are here to stay. Two big points. One, you can self host open weights models trained on completely public domain knowledge or your own private datasets already. Two, It actually does provide useful functions to home users beyond being a chatbot. People have used machine learning models to make music, generate images/video, integrate home automation like lighting control with tool calling, see images for details including document scanning, boilerplate basic code logic, check for semantic mistakes that regular spell check wont pick up on. In business ‘agenic tool calling’ to integrate models as secretaries is popular. Nft and crypto are truly worthless in practice for anything but grifting with pump n dump and baseless speculative asset gambling. AI can at least make an attempt at a task you give it and either generally succeed or fail at it.

Models around 24-32b range in high quant are reasonably capable of basic information processing task and generally accurate domain knowledge. You can’t treat it like a fact source because theres always a small statistical chance of it being wrong but its OK starting point for researching like Wikipedia.

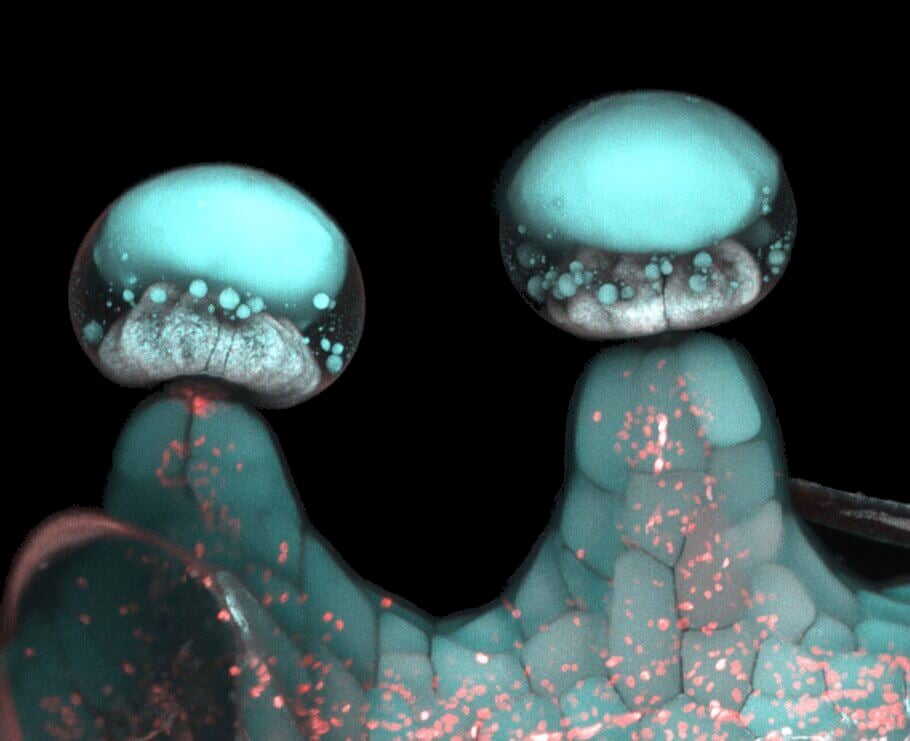

My local colleges are researching multimodal llms recognizing the subtle patterns in billions of cancer cell photos to possibly help doctors better screen patients. I would love a vision model trained on public domain botany pictures that helps recognize poisonous or invasive plants.

The problem is that theres too much energy being spent training them. It takes a lot of energy in compute power to cook a model and further refine it. Its important for researchers to find more efficent ways to make them. Deepseek did this, they found a way to cook their models with way less energy and compute which is part of why that was exciting. Hopefully this energy can also come more from renewable instead of burning fuel.

Most of these companies offer direct web/api access to their own cloud supercomputer datacenter, and All cloud services have some scaling with operation cost. The more users connect and use computer, the better hardware, processing power, and data connection needed to process all the users. Probably the smaller fine tuners like Nous Research that take a pre-cooked and open-licensed model, tweak it with their own dataset, then sell the cloud access at a profit with minimal operating cost, will do best with the scaling. They are also way way cheaper than big model access cost probably for similar reasons. Mistral and deepseek do things to optimize their models for better compute power efficency so they can afford to be cheaper on access.

OpenAI, claude, and google, are very expensive compared to competition and probably still operate at a loss considering compute cost to train the model + cost to maintain web/api hosting cloud datacenters. Its important to note that immediate profit is only one factor here. Many big well financed companies will happily eat the L on operating cost and electrical usage as long as they feel they can solidify their presence in the growing market early on to be a potential monopoly in the coming decades. Control, (social) power, lasting influence, data collection. These are some of the other valuable currencies corporations and governments recognize that they will exchange monetary currency for.

I assume you mean in a tech progression kind of way. A better comparison might be is that its being treated closer to the invention of transistors and computers. Before we could only do information processing with the cold hard certainty of logical bit calculations. We got by quite a while just cooking fancy logical programs to process inputs and outputs. Data communication, vector graphics and digital audio, cryptography, the internet, just about everything today is thanks to the humble transistor and logical gate, and the clever brains that assemble them into functioning tools.

Machine learning models are based on neuron brain structures and biological activation trigger pattern encoding layers. We have found both a way to train trillions of transtistors simulate the basic information pattern organizing systems living beings use, and a point in time which its technialy possible to have the compute available needed to do so. The perceptron was discovered in the 1940s. It took almost a century for computers and ML to catch up to the point of putting theory to practice. We couldn’t create artificial computer brain structures and integrate them into consumer hardware 10 years ago, the only player then was google with their billion dollar datacenter and alphago/deepmind.

Its exciting new toy that people think can either improve their daily life or make them money, so people get carried away and over promise with hype and cram it into everything especially the stuff it makes no sense being in. Thats human nature for you. Only the future will tell whether this new way of precessing information will live up to the expectations of techbros and academics.